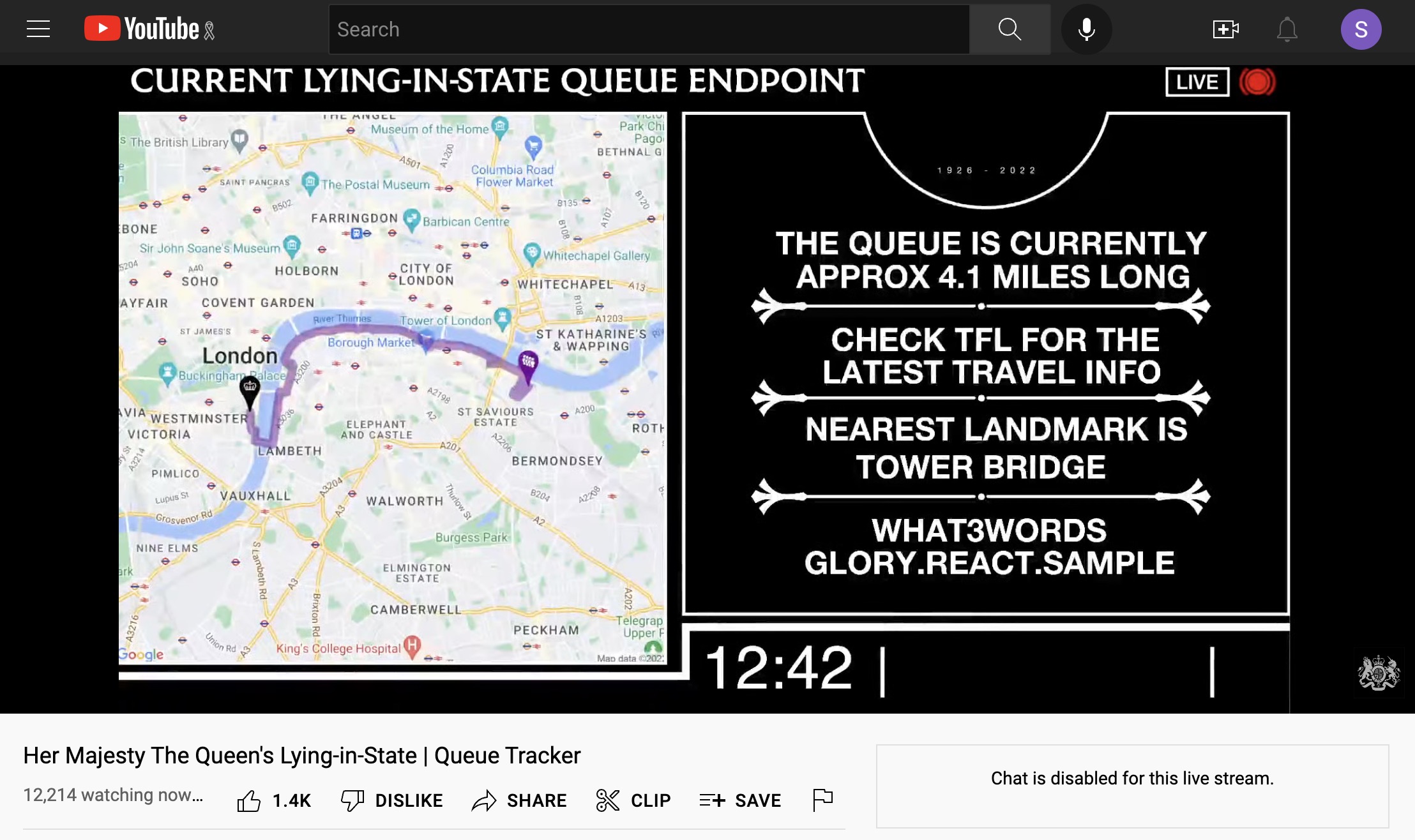

Just over a week ago, I got a call from a friend at DCMS asking for ideas on how they might help people to find the end of the queue for Her Majesty the Queen’s Lying-in-State. They had an interesting plan to livestream information to YouTube, and wanted to include some kind of live map alongside some dynamic public guidance. But how to get the data back and plot it in real time? Google (because if you’re DCMS, you just talk straight to Google…) didn’t have a ready solution, since Google Maps doesn’t really support real-time pin movements. DCMS had an ingenious plan to use My Maps and shared location, with someone beaming back their location from the ground. But that would risk breaking if mobile signal dropped, or the phone battery died… and would need someone with the special phone at the back of the queue around the clock.

I thought I’d have a fiddle around in Google Maps’ Static API and see if I could come up with something more robust and less onerous that might work within their free tier.

It didn’t seem like real-time information was literally needed here – a marshal in the queue would be reporting locations every hour or so maybe, and there’s only so fast a queue will really move. So the challenge was to show the current position of the queue, styled up clearly in a way that would show up in a two-pane livestream, and refresh it whenever a new location was reported.

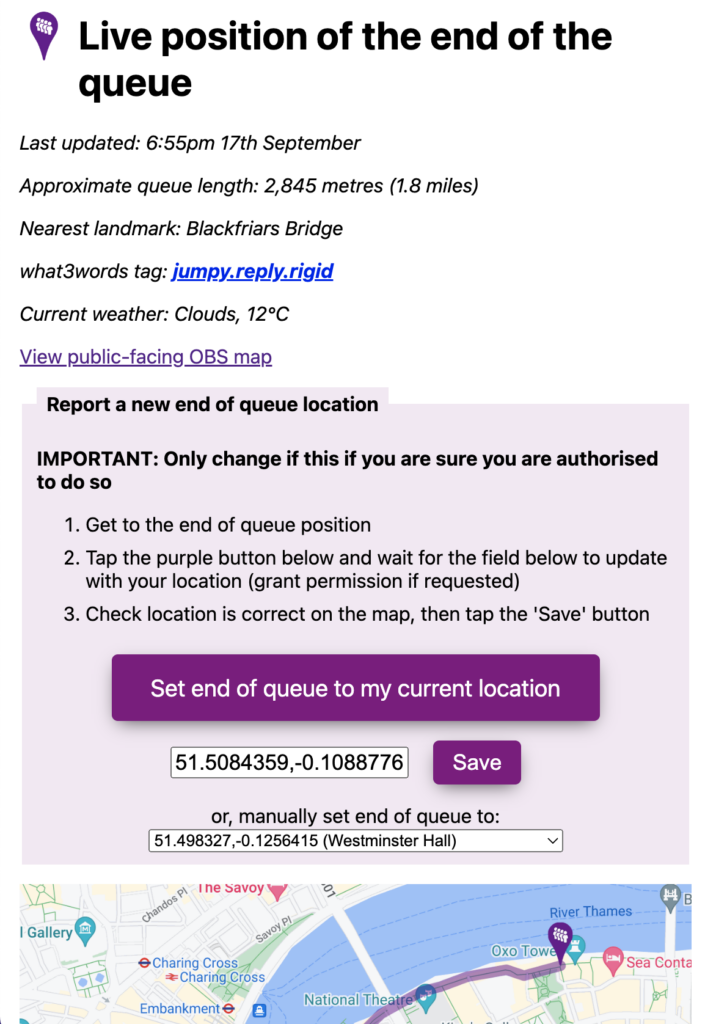

From proof of concept to launch was about 3 days. The map itself was built as a single-script PHP application, using a flat-file database to store the lat/lon co-ordinates of the end of the queue and a KML file of the planned maximum queue path. A simple management app, designed to run on a phone and built around a big purple button, let the marshal at the end of the queue get their position from the HTML geolocation API built into modern browsers, preview it on a map to check, and then update the map.

Google Maps Static API meant the map itself was pretty simple – literally just a square image – with a couple of custom marker pins and a chunky line plotting the queue, drawn along the KML path up to the point closest to the last reported queue location. The map page would refresh every 3 minutes to show the latest queue path and end point.
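
To make the "draw the path up to the closest point" idea concrete, here is a minimal sketch in Python (not the PHP that actually ran). It assumes the KML has already been parsed into a list of (lat, lon) pairs; `PLANNED_PATH`, `nearest_index` and `static_map_url` are hypothetical names, the coordinates are rough illustrative values, and the colours and sizes are guesses. As a simplification it snaps to the nearest path vertex rather than the nearest point on a segment.

```python
import math
from urllib.parse import urlencode

# Hypothetical, roughly placed vertices standing in for the parsed KML path.
PLANNED_PATH = [
    (51.4995, -0.1250),
    (51.5010, -0.1195),
    (51.5075, -0.0990),
    (51.5055, -0.0775),
    (51.4940, -0.0550),
]


def nearest_index(path, point):
    """Return the index of the path vertex closest to `point`."""
    lat0, lon0 = point
    # Longitude degrees are shorter than latitude degrees away from the equator.
    scale = math.cos(math.radians(lat0))

    def dist2(p):
        return (p[0] - lat0) ** 2 + ((p[1] - lon0) * scale) ** 2

    return min(range(len(path)), key=lambda i: dist2(path[i]))


def static_map_url(path, queue_end, api_key, size="640x640"):
    """Build a Static Maps URL showing the path up to the reported queue end."""
    visible = path[: nearest_index(path, queue_end) + 1]
    line = "|".join(f"{lat:.6f},{lon:.6f}" for lat, lon in visible)
    params = [
        ("size", size),
        ("path", f"color:0x6600ccff|weight:8|{line}"),
        ("markers", f"color:purple|label:E|{queue_end[0]:.6f},{queue_end[1]:.6f}"),
        ("key", api_key),
    ]
    return "https://maps.googleapis.com/maps/api/staticmap?" + urlencode(params)
```

Calling `static_map_url(PLANNED_PATH, (51.5050, -0.0790), "YOUR_KEY")` would give an image URL whose line stops at the fourth vertex, so a page that simply reloads that image every few minutes always shows the latest reported end point.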

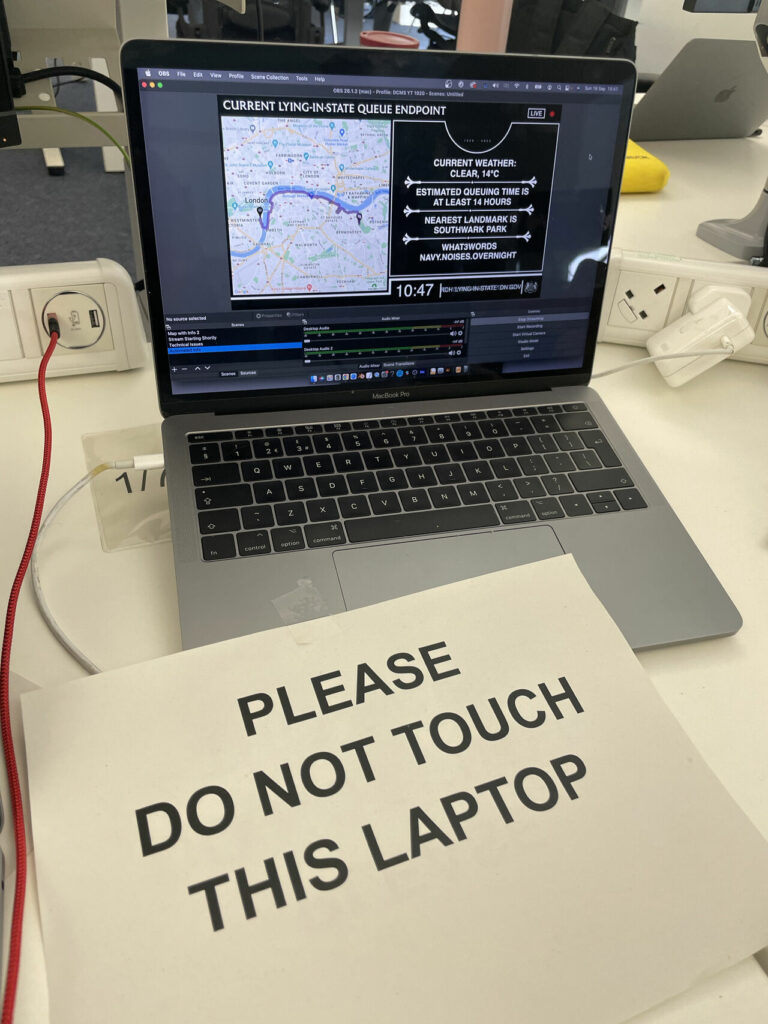

DCMS’ plan was then to stream this map via the open source OBS software, alongside a text slide which they’d keep updated with public information messages. The beauty of their plan was that YouTube became the content delivery network handling the load and reducing a lot of the security risk to the app – and they just needed a single machine in the office broadcasting the map and accompanying text.

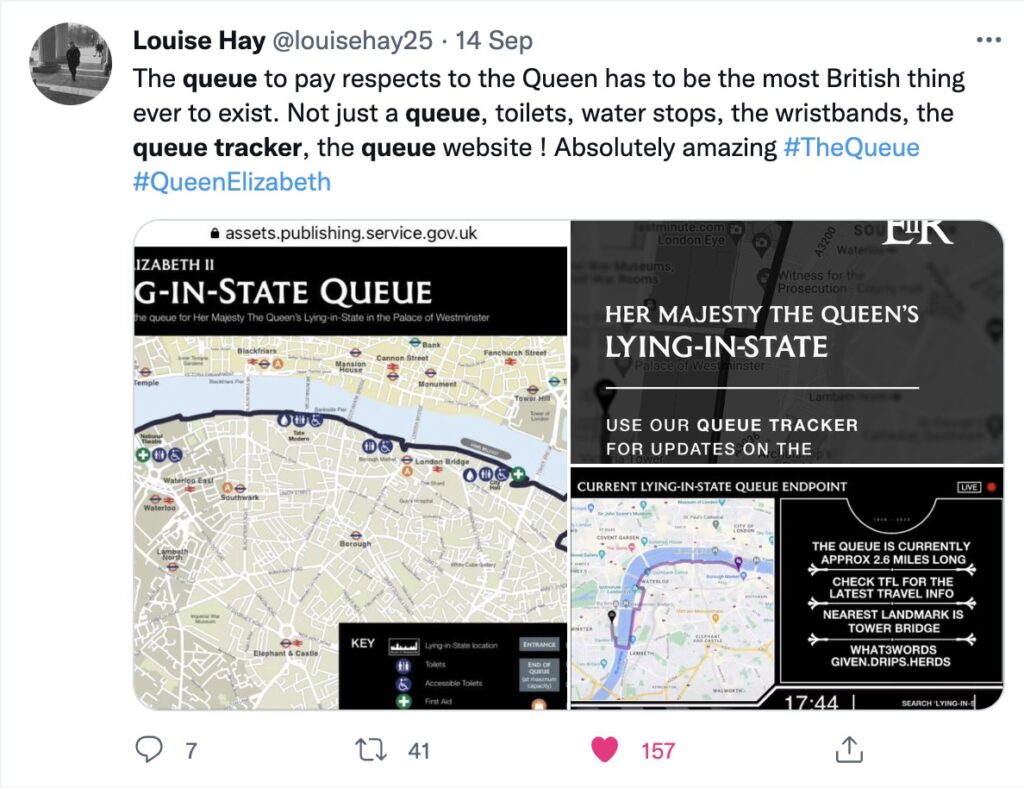

Some users understandably questioned why the information was being put out in this relatively data-intense way, but it made a lot of sense, not least because users are so familiar and comfortable with YouTube, and because media (not to mention GOV.UK) will happily embed YouTube videos when embedding maps or linking to external sites is trickier. Plus, it emerged from social media that people found watching the livestream of the queue tracker – a bit like the livestream of the lying-in-state itself – intrinsically settling, and a way to participate during a shared period of national mourning.

I cannot stress how much a part of the whole experience this was for those of us who couldn’t even conceive flying over to join the Queue. It added pizzaz and quirkyness, and allowed me to point to my Spanish colleagues about how seriously we are taking all of this. Thank you!

— Moof! Now mainly on Mastodon. (@Moof) September 20, 2022

Lesson 1: Automate whatever you can

The map system automated the process of updating a map, but in the first few hours social media critics started to point out human errors in the text updates being made to the livestreamed data. Behind every minor error is usually a small team doing its best under huge pressure, but in this case we figured we could automate a bit more of the process, using the what3words API (which is free and unlimited for lat/lon -> w3w lookups) to help reduce transcription errors.
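
For illustration, a lat/lon to what3words lookup might look something like the sketch below. It is Python rather than the original PHP, and it assumes the public v3 `convert-to-3wa` endpoint with `coordinates` and `key` query parameters and a `words` field in the JSON response; check those against the current what3words documentation before relying on them. The function names are hypothetical.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint of the what3words v3 REST API.
W3W_ENDPOINT = "https://api.what3words.com/v3/convert-to-3wa"


def w3w_request_url(lat, lon, api_key):
    """Build the lookup URL for a single lat/lon pair."""
    query = urlencode({"coordinates": f"{lat},{lon}", "key": api_key})
    return f"{W3W_ENDPOINT}?{query}"


def words_from_response(payload):
    """Pull the three-word address out of a decoded JSON response."""
    words = payload.get("words")
    if not isinstance(words, str) or words.count(".") != 2:
        raise ValueError("response did not contain a three-word address")
    return words


def lookup_words(lat, lon, api_key, timeout=10):
    """Fetch the three-word address over HTTPS (makes a network call)."""
    with urlopen(w3w_request_url(lat, lon, api_key), timeout=timeout) as resp:
        return words_from_response(json.load(resp))
```

Because the words are copied straight from the API response into the graphic, nobody has to retype them, which is where the transcription errors were creeping in.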

A day or so into the livestream, we switched up the two-panel system so the little web app was actually driving all the graphics: refreshing the map alongside a panel showing the automated what3words location, working out the nearest London landmark to the end of the queue by lat/lon, and introducing a mini content management system to manage text updates within the frame. This meant the system needed less manual intervention from a sleep-deprived team.
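
Finding the nearest landmark by lat/lon can be done with a great-circle distance check against a short list of known places. The sketch below is Python, not the original code; the `LANDMARKS` table is hypothetical and its coordinates are approximate, and `haversine_m` and `nearest_landmark` are illustrative names.

```python
import math

# Hypothetical landmark table with approximate coordinates.
LANDMARKS = {
    "Tower Bridge": (51.5055, -0.0754),
    "London Bridge": (51.5079, -0.0877),
    "Tate Modern": (51.5076, -0.0994),
    "London Eye": (51.5033, -0.1196),
}

EARTH_RADIUS_M = 6_371_000.0


def haversine_m(a, b):
    """Great-circle distance in metres between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (
        math.sin((lat2 - lat1) / 2) ** 2
        + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2
    )
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def nearest_landmark(point, landmarks=LANDMARKS):
    """Return (name, distance in metres) of the landmark closest to `point`."""
    name = min(landmarks, key=lambda n: haversine_m(point, landmarks[n]))
    return name, haversine_m(point, landmarks[name])
```

With a table like this, a reported position of `(51.5054564, -0.0775452)` resolves to "Tower Bridge", which is the kind of plain-language label a viewer can act on.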

Lesson 2: …but incorporate manual overrides

Reporting the end of the queue location from a marshal on the ground worked great in testing… on personal phones with location enabled. When reality bit in the first couple of hours and a marshal tried reporting location from an official device (which had geolocation disabled), things didn’t work as planned. Thankfully I’d incorporated enough manual slack in the little app that someone in the office could override the co-ordinates, or fix the queue end point to a known point on the planned queue path.
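
One way to structure that kind of fallback is a single function that decides which position wins. This is only a sketch in Python: the precedence order (pinned path point, then typed-in co-ordinates, then the marshal's geolocated report) is an assumption rather than a description of how the real app behaved, and `resolve_queue_end` is a hypothetical name.

```python
from typing import Optional, Sequence, Tuple

LatLon = Tuple[float, float]


def resolve_queue_end(
    reported: Optional[LatLon],
    manual: Optional[LatLon] = None,
    pinned_index: Optional[int] = None,
    planned_path: Sequence[LatLon] = (),
) -> LatLon:
    """Pick the queue end position, letting office overrides win."""
    # 1. A point pinned to the planned path takes priority (assumed order).
    if pinned_index is not None:
        if not 0 <= pinned_index < len(planned_path):
            raise ValueError("pinned index is not on the planned path")
        return planned_path[pinned_index]
    # 2. Otherwise use co-ordinates typed in by someone in the office.
    if manual is not None:
        lat, lon = manual
        if not (-90 <= lat <= 90 and -180 <= lon <= 180):
            raise ValueError("manual co-ordinates out of range")
        return manual
    # 3. Fall back to whatever the marshal's phone reported.
    if reported is None:
        raise ValueError("no position available")
    return reported
```

Keeping the decision in one place means a device with geolocation disabled (so `reported` is `None`) fails loudly unless an override has been supplied, rather than silently leaving a stale pin on the map.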

Lesson 3: Think a step ahead

If I’d thought a bit more about what the team might need as the queue predictably hit maximum length, things might have been a bit less of a scramble at times. Deploying code changes to production with very light testing might have been less intimidating too (deploying code that is being livestreamed to 12k viewers, in the full glare of Twitter and global media, brings a special feeling of dread!). Essentially, we ended up constructing a mini-CMS on the fly: for instance, bits of data that started out in a simple flat-file text database had to be migrated into JSON when I realised we would need to store settings alongside strings.
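
As a rough picture of that migration, the sketch below turns a one-message-per-line text file into a JSON document with room for settings. It is Python rather than PHP, and both the flat-file layout and the setting names are assumptions made purely for illustration; `migrate_flatfile` is a hypothetical helper.

```python
import json


def migrate_flatfile(text, default_settings):
    """Convert one-message-per-line text into a settings-plus-messages dict."""
    # Assumed flat-file layout: one message per line, blank lines ignored.
    messages = [line.strip() for line in text.splitlines() if line.strip()]
    return {"settings": dict(default_settings), "messages": messages}


def to_json(store):
    """Serialise the store for writing to disk."""
    return json.dumps(store, indent=2, ensure_ascii=False)
```

For example, `migrate_flatfile(old_text, {"show_landmark": True})` keeps every existing string while giving flags such as the hypothetical `show_landmark` a typed home, which a plain list of lines cannot offer.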

Lesson 4: Keep logs

One good decision I made early on was to log updates to a human-readable log file. That helped us quickly sort out problems with updating locations, and also let us extract a nice timestamped dataset of how the queue location changed over time, plus an audit trail if needed showing how and when messages were updated. Having that log file gave me important peace of mind when deploying such a fast-changing app.
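
A human-readable log that doubles as a dataset can be as simple as one tab-separated line per event. The sketch below is Python, and the line format (ISO timestamp, event type, detail) and the function names are assumptions for illustration, not the format the real app used.

```python
from datetime import datetime, timezone


def format_entry(when, event, detail):
    """Format one log line: ISO timestamp, event type, free-text detail."""
    return f"{when.isoformat(timespec='seconds')}\t{event}\t{detail}"


def append_entry(path, event, detail, when=None):
    """Append a single entry to the log file at `path`."""
    when = when or datetime.now(timezone.utc)
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(format_entry(when, event, detail) + "\n")


def location_history(lines):
    """Extract (timestamp, lat, lon) rows from 'location' entries."""
    rows = []
    for line in lines:
        stamp, event, detail = line.rstrip("\n").split("\t", 2)
        if event == "location":
            lat, lon = (float(x) for x in detail.split(","))
            rows.append((datetime.fromisoformat(stamp), lat, lon))
    return rows
```

Location updates would be written as `append_entry("queue.log", "location", "51.5054564,-0.0775452")` and message edits under a different event name, so the same file serves as both the audit trail and the source for a timestamped track of the queue end. Details containing tabs or newlines would need escaping first.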

In what became a bit of a whirlwind week, it was a privilege to be able to support the creative, ambitious and dedicated team at DCMS delivering what turned out to be a fast-moving digital service with even bigger global reach than we’d imagined. Huge credit to Andrew Simpson, Stuart Livesey, Joe Folan, Lucy Foster and Andy Dangerfield for their vision and hard work pulling everything together, and navigating hurdles I can only imagine.

I didn’t get to The Queue myself, and as an ex-civil servant still working out what I do as a postbureaucrat, I think on a personal level working on the Queue Tracker has been my contribution to saying thank you and goodbye to the Queen.

In many ways, it was indeed the most bonkersly British bit of internet ever invented.

Comments

Do we know why DCMS decided to promote the commercial, and somewhat controversial, what 3 words app on such a somber occasion? Advertising on an office feed relating to the funeral? Was there a tender to consider location apps, like Ordnance Survey (crown copyright) OS Maps app and grid reference, or Google Plus codes, or locus plus codes, or even fourkingmaps, from which What 3 words was chosen somehow? Did What 3 words pay for the advertising on this feed? (consider this an FoI request please).

This was super useful for everyone I spoke to as a marshal. I would suggest that the accessible queue also has live updates though, as the flat text on the website was misunderstood and often out of date for those people.

Great piece of work altogether though!

(a) no idea

(b) you can’t FOI me, I’m just a random bloke

Next time just use SQLite, it’s just a file.

Any plans to release the source code? And do you know if “lessons have been learnt” and a queuing system is planned and prepared for the next time? (which admittedly could be 20+ years away)

Deeply frustrating to see the terrible What3Words being promoted so highly. The right call would have been to Ordnance Survey, or plus codes, or just regular lat and long. Why on earth was this chosen?

@RevK – FOI requests also need a valid address for correspondence and a full, real name…

but anyway, as pointed out, this is just a random bloke, not a public authority

I dunno. What use would there have been putting up 51.5054564,-0.0775452 on a livestream video though? At least ‘slip.chill.banks’ as a what3words handle gives a regular person a chance of finding Tower Bridge if they want to look it up. But, tbh I’m agnostic about the pros and cons of w3w.

No plans, this was a voluntary effort and the code is pretty scrappy (as the blog post suggests) and so niche I’m not sure it’s worth tidying. I’m sure DCMS and Cabinet Office will be doing some kind of retrospective but these are just my reflections as a random bloke who got involved.

Thank you very much for this insight, and well done!

It looks like you relied on Twitter to provide the information in a textual format accessible to screenreaders?

Perhaps the YouTube API could have been used to automatically update the description so the information was there in text format too.

Interesting idea. I had quite a narrow involvement in the whole thing, but I know the team were using the graphics and adding ALT text to tweets to provide screenreadable text alternatives. Dynamic description updates for YouTube sound like something worth exploring.

of course this was you. thank you so much for bringing a creative idea that makes something devilishly complex and human into something simple and human.

Absolutely incredible. Thanks so much for sharing this. I had assumed the whole thing was long in the planning as part of all the prep that’s been happening over the years. I’m both amazed it wasn’t, and amazed at what a great job was achieved without the planning.

Thanks. I didn’t mean to imply that DCMS didn’t have a well-organised plan: they totally did. Their plan would have worked fine I’m sure, but I’m glad they kept trying to improve the solution and took a punt on my scheme in the end.

I think the person got the idea from Flightradar24 when their site got hammered over the coffin flight a few days earlier. I can see the benefits however I hope it doesn’t catch on past these 2 instances.